Generative AI has transformed how we search for information. Put simply, the front door to the internet has changed for the first time in 25 years. But how do chatbots retrieve information, and how reliable are their responses?

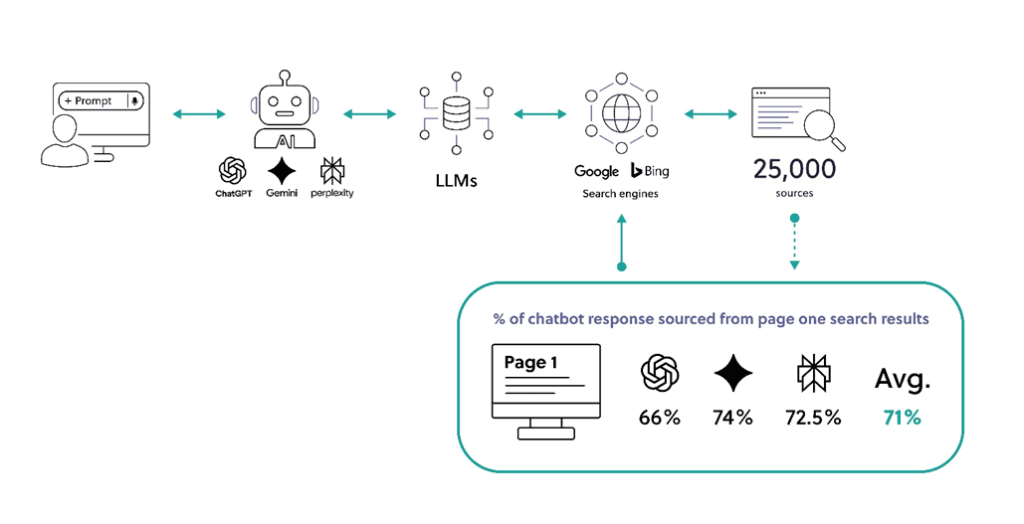

Over the past two years, the Research & Development team at Digitalis has analysed over 25,000 sources that sit behind responses generated by ChatGPT, Gemini, and Perplexity. The results challenge some of the prevailing narratives about generative AI.

Read the full report below:

Generative AI has fundamentally altered the way many of us now look up information online. However, for corporate communications and reputation managers, the rules of engagement have not completely changed.

We tend to trust the information generated by AI chatbots, not least because it is presented back to us coherently, succinctly, and confidently.

But how reliable are these new tools? What types of risks do they pose when people use them for research or to follow vital news agendas? How can one exert narrative control within an AI-mediated system?

Chatbots look and feel novel in how they present information to us. But the method by which they retrieve, prioritise, and then serve emergent information back to us remains in large part reliant on traditional online search algorithms.

Prominence in the search index – usually Google and/or Bing (whose index sits behind ChatGPT and Perplexity) – is arguably the single most important factor in determining how the leading chatbots synthesise answers for informational queries.

AI chatbots often simply summarise what search engine ranking systems determine is important.

Over the past two years, we have analysed more than 25,000 sources that inform responses generated by ChatGPT, Gemini, and Perplexity.

The results challenge some of the prevailing narratives about generative AI.

‘Hallucinations’ are a well-documented problem with AI retrieval systems. Unsubstantiated responses are more likely to occur when the user inputs a directional prompt (i.e. seeking specified information about a subject) for which there is a lack of pertinent information within the index. As the model is designed to provide an answer, it can conflate information to create one when there is no good source content.

Declarative, contextual language in source content helps the retrieval system match that content to user intent more effectively.

But there is a much more profound problem when assessing the reliability of chatbots as research tools.

Our analysis shows that, for informational queries relating to people and to entities, approximately two-thirds of the information generated by these models is retrieved from sources that sit across the first pages of the searched indices. Chatbots offer no new powers of discernment that would enable them to generate novel responses. They are merely summarising and reinforcing whatever already ranks prominently in online search, usually for a series of related searches (or ‘query fan-outs’).
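To make the first-page share concrete, here is a minimal Python sketch of how such a figure could be computed for a single chatbot response. It is not the method used in the research: the function names (`normalise`, `first_page_share`), the URLs, and the fan-out queries are all hypothetical, and the URL matching is deliberately crude.

```python
from urllib.parse import urlsplit


def normalise(url: str) -> str:
    """Reduce a URL to host + path so trivial variations still match.

    Assumption: scheme, "www." prefix, trailing slash, query string and
    letter case are ignored. A real analysis would need stricter rules.
    """
    parts = urlsplit(url.strip().lower())
    host = parts.netloc.removeprefix("www.")
    path = parts.path.rstrip("/")
    return f"{host}{path}"


def first_page_share(cited_urls, first_page_by_query):
    """Fraction of cited sources found on page one of any fan-out query."""
    # Pool the first-page results of every related query into one set.
    first_page = {
        normalise(url)
        for results in first_page_by_query.values()
        for url in results
    }
    cited = {normalise(url) for url in cited_urls}
    if not cited:
        return 0.0
    return len(cited & first_page) / len(cited)


# Hypothetical sources cited by one chatbot response.
cited = [
    "https://www.example.com/about/",
    "https://news.example.org/profile",
    "https://blog.example.net/old-post",
]

# Hypothetical first-page results for two related ("fan-out") queries.
first_page_by_query = {
    "acme ltd": [
        "https://example.com/about",
        "https://reference.example.edu/acme",
    ],
    "acme ltd ceo": [
        "https://news.example.org/profile/",
    ],
}

share = first_page_share(cited, first_page_by_query)
print(f"{share:.0%} of cited sources appear on a first page")
```

In this illustrative data, two of the three cited sources appear on a first page, which happens to mirror the two-thirds figure reported above. Pooling results across the related queries matters: a source may rank on page one for only one of the fan-out searches and still feed the response.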

This matters for privacy and reputation management because the leading online search algorithms have been engineered over time to surface information that engages users – measured in clicks, shares, and dwell-time – rather than information that is the most accurate, reliable, or contemporary.

As we are all now well aware, this obsession with showing users ‘engaging’ information is what drives salacious and sensationalist content on the internet and, unfortunately, what reinforces societal prejudices, polarisation, and diminished trust in our institutions. Content engines know only too well that information triggering negative emotional responses travels further and faster.

Today this problem is compounded because researchers tend to act upon information leads in chatbot responses more readily than leads generated from traditional search.

For business leaders, investors, and other public-facing economic actors, this built-in negative bias presents serious real-world challenges. If your organisation’s first page of search results is thin on detail, outdated, or otherwise uncontrolled, AI does not – and cannot – correct for that without conflating or hallucinating.

Even when chatbot responses are generated purely from training data rather than live online results (which is rare for prompts about living people or businesses currently in operation), it is the prominence of information within the relevant search indices at the point the data was cached that steers the response. The next time OpenAI, Anthropic, and other AI firms re-train their models (every few months), the prioritisation of information within the index will influence the AI model’s ‘new norms’ following that cache.

In practice, this points to four steps. First, keep a regular watch on what the popular chatbots are saying about you, your business, or any of its specific interests. If you are not happy with what they are conveying to the outside world, consider taking active measures to better shape their responses.

Second, page-one results matter more than ever because they feed directly into the ostensibly more convincing AI-mediated responses. Regularly check your first-page Google and Bing results and, again, consider taking active measures to improve them.

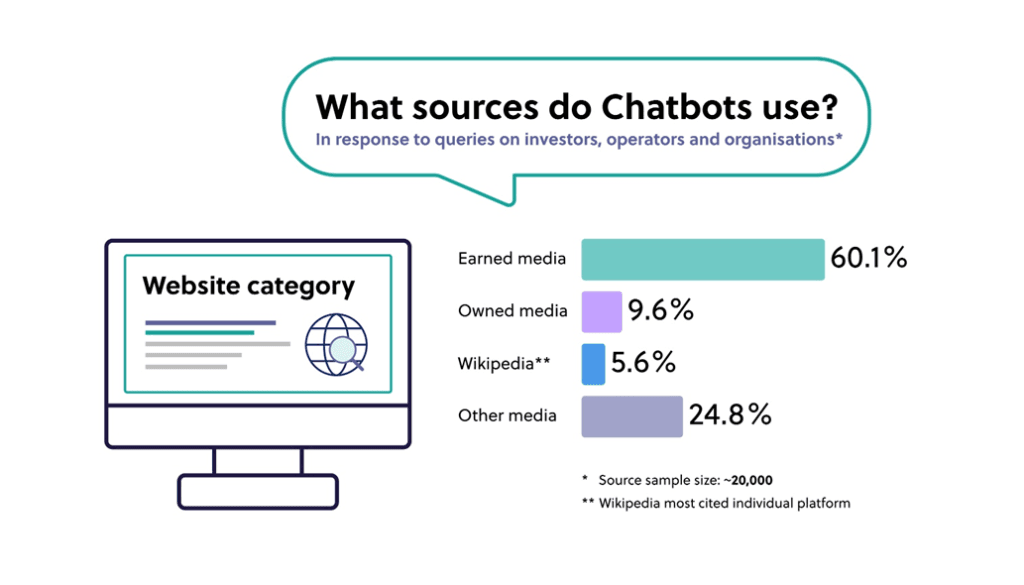

Third, earned media quality matters more than volume. In our research, a small number of authoritative pieces won out against a large amount of controlled content.

And finally, make sure your owned media assets are factually dense, declarative, and contextualised for the in-bound stakeholder queries you care most about.

The influence of AI-mediated research will continue to increase, particularly as younger cohorts move up through the ranks and new agentic AI workflow systems evolve. This new era of AI-mediated content is no more reliable than traditional online search, and indeed potentially carries new and more acute risks to online reputation, privacy, and security.

Additional reporting by Tom Head.


© Digitalis Media Ltd.
